A founder we work with forwarded an email from his CFO three days before a board meeting. Paraphrased: "Before we approve the additional AI budget, I need engineering to give us a number for how accurate this thing actually is. 'It works well in our testing' is not going to fly."

The team had no number. They had a Notion page of test queries with green and red emoji next to each one. They had user satisfaction screenshots from a Slack channel. They had a confident product team. What they did not have was a single defensible accuracy metric they could put in a slide.

This is the conversation every RAG team has eventually. The right time to set up evaluation is before the launch, not three days before the board meeting. Here are the three metrics we ship with every project, what each one actually tells you, and why most teams skip them until it is too late.

Why "is it accurate" is the wrong question

"Accuracy" assumes there is a single right answer per question and the system either nails it or misses it. RAG systems fail in structurally different ways, and lumping them together as "accuracy" hides the bug.

A RAG system can:

- Retrieve the wrong context but generate a confident answer anyway (hallucination from missing grounding).

- Retrieve the right context but generate an answer that contradicts it (hallucination from the generation step).

- Retrieve the right context and generate the right answer (the working case).

A single accuracy number cannot distinguish the first case from the second, but the fixes are completely different. The first is a retrieval problem (better embeddings, better chunking, hybrid search). The second is a generation problem (better prompt, larger model, faithfulness gate).

The Ragas framework decomposes RAG quality into separate metrics that each map to a specific failure mode. Three of them carry most of the weight.

Metric 1: Faithfulness

What it measures: does the generated answer make claims that are actually supported by the retrieved context?

How it is computed: an LLM-as-judge breaks the answer into atomic claims, then checks each claim against the context. The score is the fraction of claims that are grounded.
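To make the arithmetic concrete, here is a minimal sketch of the scoring step only. It assumes the answer has already been split into atomic claims, and it takes the judge as a plain callable; `faithfulness_score` and `substring_judge` are hypothetical names for illustration, not Ragas functions, and the substring check stands in for a real LLM judge.

```python
from typing import Callable, Sequence


def faithfulness_score(
    claims: Sequence[str],
    context: str,
    is_supported: Callable[[str, str], bool],
) -> float:
    """Return the fraction of claims the judge marks as supported by the context."""
    if not claims:
        # Assumption: an answer with no extractable claims scores 0.
        return 0.0
    supported = sum(1 for claim in claims if is_supported(claim, context))
    return supported / len(claims)


def substring_judge(claim: str, context: str) -> bool:
    """Toy judge for illustration only: supported means 'appears verbatim'."""
    return claim.lower() in context.lower()


context = (
    "The refund window is 30 days. "
    "Refunds are issued to the original payment method."
)
claims = [
    "the refund window is 30 days",
    "refunds are issued to the original payment method",
    "refunds take 2 business days",  # not in the context
]
score = faithfulness_score(claims, context, substring_judge)
```

In this toy example two of the three claims are grounded, so the score is 2/3. In a real pipeline both the claim extraction and the support check would be LLM calls.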

Why it matters: this is the metric that catches confident hallucinations. If faithfulness is below 0.85 in production, the system is making up facts and saying them with conviction. A user has no way to detect this from the answer alone.

What good looks like: above 0.90 for general-purpose assistants, above 0.95 for compliance-sensitive use cases (legal, medical, financial).

The Sapota playbook is to gate every production response on a real-time faithfulness check using a small model. If the score for a generated answer falls below the threshold, return an "I cannot find a confident answer for this in the knowledge base" message instead of shipping the hallucination to the user. This single gate cuts user-reported wrong answers by around 60% in the audits we have run.
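A rough sketch of what such a gate can look like in the response path. The scorer is injected as a callable so the example stays independent of any particular judge model; `gate_response` is a hypothetical name, and the 0.90 default is only an assumption borrowed from the general-purpose target above.

```python
from typing import Callable

FALLBACK_MESSAGE = (
    "I cannot find a confident answer for this in the knowledge base."
)


def gate_response(
    answer: str,
    context: str,
    score_faithfulness: Callable[[str, str], float],
    threshold: float = 0.90,  # assumption: tune per use case
) -> str:
    """Return the answer only if its faithfulness score meets the threshold."""
    score = score_faithfulness(answer, context)
    if score < threshold:
        return FALLBACK_MESSAGE
    return answer


# Stub scorers standing in for a small judge model.
context = "The refund window is 30 days."
grounded = gate_response(
    "The refund window is 30 days.", context, lambda a, c: 0.97
)
blocked = gate_response(
    "Refunds take 2 business days.", context, lambda a, c: 0.40
)
```

Here `grounded` comes back unchanged and `blocked` is replaced by the fallback message. The gate adds one judge call to every response, which is why a small model is the natural choice for the scorer.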

Metric 2: Context Recall

What it measures: of the information needed to answer the question correctly, how much of it actually made it into the retrieved chunks?

How it is computed: for each ground-truth answer, an LLM-as-judge identifies the atomic facts in the answer, then checks how many of those facts are present in the retrieved context.
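As a sketch of that calculation, assuming the ground-truth answer has already been broken into atomic facts: the function below returns the recall score together with the facts that never reached the context, which is usually the more useful output when debugging retrieval. `context_recall` and `substring_judge` are illustrative names, and the substring check is a stand-in for an LLM judge.

```python
from typing import Callable, List, Sequence, Tuple


def context_recall(
    ground_truth_facts: Sequence[str],
    retrieved_chunks: Sequence[str],
    is_present: Callable[[str, str], bool],
) -> Tuple[float, List[str]]:
    """Return (recall, missing_facts) for one question."""
    if not ground_truth_facts:
        # Assumption: nothing was required, so nothing is missing.
        return 1.0, []
    context = "\n".join(retrieved_chunks)
    missing = [
        fact for fact in ground_truth_facts if not is_present(fact, context)
    ]
    recall = 1 - len(missing) / len(ground_truth_facts)
    return recall, missing


def substring_judge(fact: str, context: str) -> bool:
    """Toy judge for illustration only: present means 'appears verbatim'."""
    return fact.lower() in context.lower()


facts = [
    "refund window is 30 days",
    "refunds go to the original payment method",
]
chunks = ["Our refund window is 30 days from delivery."]
recall, missing = context_recall(facts, chunks, substring_judge)
```

With one of two facts retrieved, `recall` is 0.5 and `missing` names the fact the retriever never surfaced.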

Why it matters: this is the metric that catches retrieval failures upstream of generation. A faithfulness score of 1.0 looks great, but if context recall is 0.4, the system is faithfully answering with only 40% of the relevant information. The answer is technically grounded but materially incomplete.

What good looks like: above 0.80 for most use cases. Below 0.70 means the retrieval layer is the bottleneck and no amount of prompt tuning will fix the underlying problem.

The teams that obsess over prompt engineering when context recall is low are sanding the paint on a car with no engine. Fix retrieval first. Hybrid search, better chunking, query expansion. Then come back to the prompt.
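The "fix retrieval first" rule can be written down as a small triage function. This is a sketch, not a standard: the function name is hypothetical, and the two floors reuse numbers quoted earlier in this post (0.70 for context recall, 0.85 for faithfulness) as assumed defaults.

```python
def diagnose(
    faithfulness: float,
    context_recall: float,
    recall_floor: float = 0.70,        # assumption: floor quoted above
    faithfulness_floor: float = 0.85,  # assumption: floor quoted above
) -> str:
    """Name the layer to fix first, checking retrieval before generation."""
    if context_recall < recall_floor:
        # Missing context cannot be repaired by prompt tuning.
        return "retrieval"
    if faithfulness < faithfulness_floor:
        return "generation"
    return "ok"


# Perfectly faithful, but most of the needed context never arrived.
first_fix = diagnose(faithfulness=1.0, context_recall=0.4)
```

Retrieval is checked first on purpose: a low recall score makes the faithfulness number hard to interpret, because the generator was never given the material it needed.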

Metric 3: Answer Correctness

What it measures: how close the generated answer is to the ground-truth answer, weighing both factual overlap and semantic similarity.

How it is computed: combines a semantic similarity score (embedding cosine between generated and ground-truth answers) with an LLM-as-judge factual overlap check.
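One way to picture the combination is a weighted blend of the two signals. The sketch below assumes the factual overlap score has already been produced by the judge and that both answers have already been embedded; the 0.75 weight on the factual part is an illustrative assumption, and `answer_correctness` here is a hypothetical helper, not the Ragas implementation.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # assumption: a zero vector carries no similarity
    return dot / (norm_a * norm_b)


def answer_correctness(
    factual_score: float,
    answer_embedding: Sequence[float],
    truth_embedding: Sequence[float],
    factual_weight: float = 0.75,  # assumption: illustrative weighting
) -> float:
    """Blend judge-scored factual overlap with embedding similarity."""
    similarity = cosine_similarity(answer_embedding, truth_embedding)
    return factual_weight * factual_score + (1 - factual_weight) * similarity


# Tiny 2-d vectors stand in for real embeddings.
same_direction = answer_correctness(0.5, [1.0, 0.0], [2.0, 0.0])
orthogonal = answer_correctness(0.5, [1.0, 0.0], [0.0, 1.0])
```

With a factual score of 0.5, identical directions give 0.75 * 0.5 + 0.25 * 1.0 = 0.625, and orthogonal embeddings give 0.375. Weighting the factual part more heavily keeps a fluent but wrong answer from scoring well on similarity alone.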

Why it matters: this is the closest metric to what stakeholders mean when they say "accuracy," but it requires a ground-truth answer set. The metric is meaningful only to the extent that the ground-truth set represents the actual production query distribution.

What good looks like: above 0.75 for production, with the caveat that the absolute number depends heavily on the eval set quality. Trends matter more than absolute values. A 5% week-over-week drop is the alert signal.
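The drop check itself is a few lines. This sketch reads "5%" as a relative drop against the previous week's score, which is an assumption; an absolute five-point drop would be an equally defensible reading. The function names and the example scores are made up for illustration.

```python
from typing import Dict, List


def relative_drop(previous: float, current: float) -> float:
    """Fractional decrease from previous to current (negative if it rose)."""
    if previous <= 0:
        return 0.0  # assumption: no baseline, nothing to compare against
    return (previous - current) / previous


def metrics_to_alert(
    previous: Dict[str, float],
    current: Dict[str, float],
    max_drop: float = 0.05,
) -> List[str]:
    """Names of metrics whose week-over-week drop exceeds max_drop."""
    return sorted(
        name
        for name, score in current.items()
        if name in previous
        and relative_drop(previous[name], score) > max_drop
    )


# Illustrative scores only.
last_week = {"faithfulness": 0.91, "context_recall": 0.80}
this_week = {"faithfulness": 0.90, "context_recall": 0.72}
alerts = metrics_to_alert(last_week, this_week)
```

In this example only `context_recall` fires: it fell 10% relative to last week, while faithfulness moved by about 1%.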

What you actually need to ship

The minimum viable evaluation setup, in order:

- A ground-truth eval set of 100 questions. Written by engineering and product, then reviewed by a domain expert. Include the long-tail queries that came in during the alpha. If the system is multi-tenant, sample across tenants.

- A weekly cron that runs the eval set through the production pipeline and computes Faithfulness, Context Recall, and Answer Correctness using Ragas. We use OpenAI's gpt-4o-mini as the judge model for cost reasons. Total compute cost is under $5 per weekly run for a 100-question set.

- A dashboard with three lines (one per metric) tracking the scores week over week. Slack alert when any metric drops more than 5%.

- A real-time faithfulness gate in the production response path. Generate the answer, score it, return it only if faithfulness is above the threshold. This is where the user-perceived quality jump happens.
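The storage behind the dashboard can be very small. The sketch below uses Python's built-in `sqlite3` purely as a runnable stand-in for the Postgres table; the table name, columns, dates, and scores are all hypothetical, and on Postgres the upsert would be written with `ON CONFLICT` rather than SQLite's `INSERT OR REPLACE`.

```python
import sqlite3
from datetime import date
from typing import Dict, List, Tuple

SCHEMA = """
CREATE TABLE IF NOT EXISTS eval_runs (
    run_date TEXT NOT NULL,
    metric   TEXT NOT NULL,
    score    REAL NOT NULL,
    PRIMARY KEY (run_date, metric)
)
"""


def record_run(
    conn: sqlite3.Connection, run_date: date, scores: Dict[str, float]
) -> None:
    """Store one row per metric for a single eval run."""
    rows = [(run_date.isoformat(), m, s) for m, s in scores.items()]
    with conn:  # commits on success, rolls back on error
        conn.executemany(
            "INSERT OR REPLACE INTO eval_runs VALUES (?, ?, ?)", rows
        )


def metric_history(
    conn: sqlite3.Connection, metric: str
) -> List[Tuple[str, float]]:
    """Return (run_date, score) pairs for one metric, oldest first."""
    cursor = conn.execute(
        "SELECT run_date, score FROM eval_runs "
        "WHERE metric = ? ORDER BY run_date",
        (metric,),
    )
    return cursor.fetchall()


conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
# Illustrative dates and scores only.
record_run(conn, date(2024, 1, 8), {"faithfulness": 0.88})
record_run(conn, date(2024, 1, 1), {"faithfulness": 0.90})
history = metric_history(conn, "faithfulness")
```

Each dashboard line is then one `metric_history` query, and the last two rows of each history are enough to drive the drop alert.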

That is the full setup. There is no cloud LLMOps platform required. We have shipped this in two days for several Sapota projects using Ragas, a Postgres table, and a weekly GitHub Actions cron.

What we sent the founder for the board meeting

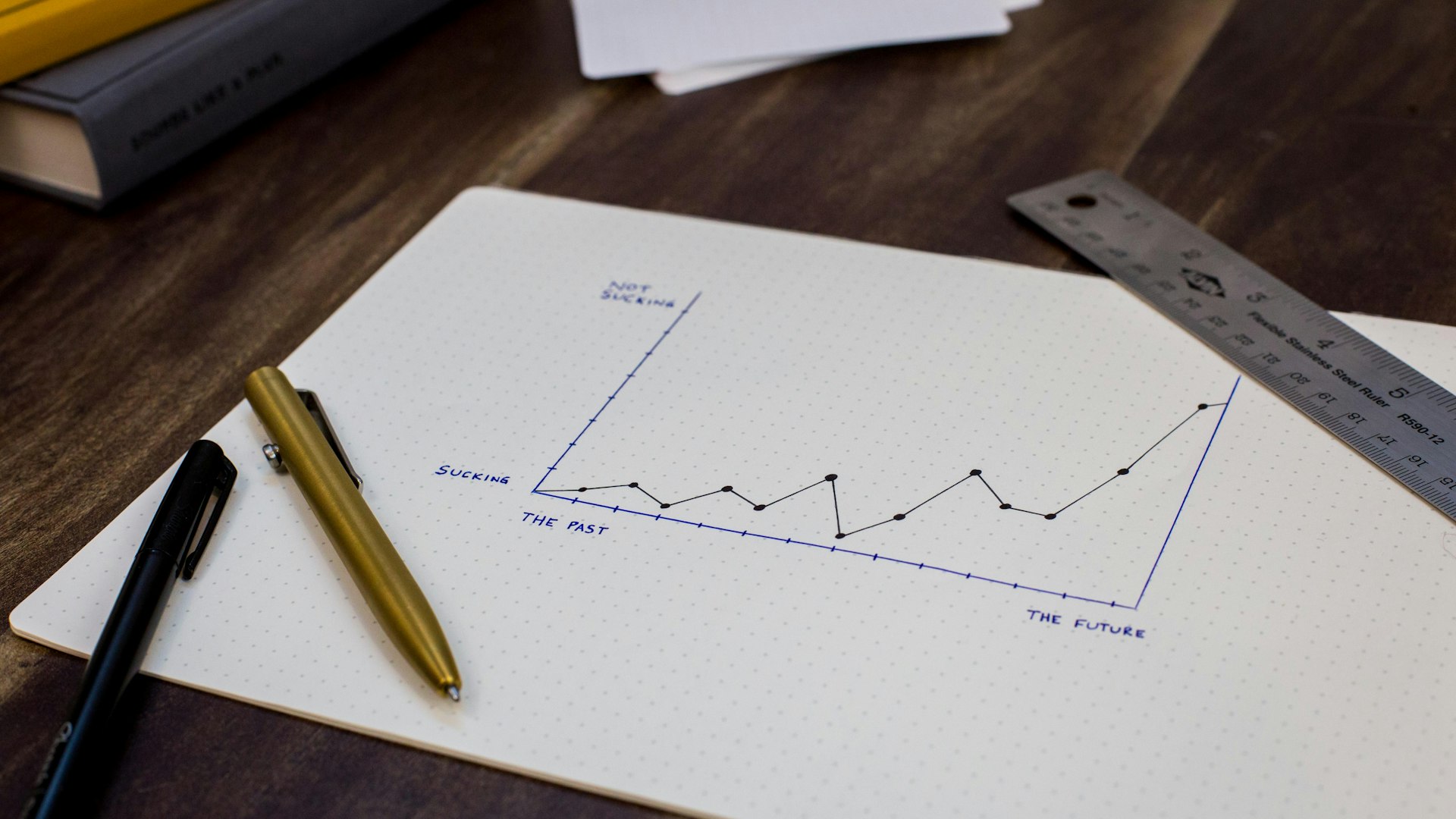

The diagnostic ran on Wednesday. Thursday morning the team had three numbers: Faithfulness 0.91, Context Recall 0.78, Answer Correctness 0.74. Friday's board deck included the chart and a one-paragraph note that Context Recall was the current bottleneck and the next sprint would close it via hybrid search and chunk-size tuning.

The CFO did not ask for a higher accuracy number. He asked when the next snapshot would be available. The conversation moved from "should we approve this" to "how do we measure progress." Which is the conversation engineering wanted in the first place.

A note on why teams skip this

Setting up Ragas takes a day. Picking the eval set takes a week. The reason teams skip it is that the eval set work is unglamorous, and there is always a feature that looks more important.

The teams that skip it pay the cost twice: once when production complaints start coming in and they cannot diagnose which layer is broken, and again when a stakeholder asks for a number and they cannot produce one.

Build the eval set before the launch. Even an imperfect 50-question set with rough ground-truth answers is enough to start measuring trends. You can refine it over the first month of production, when you actually see what users ask.

If you cannot answer the accuracy question

If your team is in the position of having a working RAG system and no defensible accuracy number, that is the gap a Sapota evaluation engagement closes. Two weeks, fixed scope, ships you the eval set, the Ragas pipeline, the dashboard, and the faithfulness gate as a working PR.

Reach out via the AI engineering page. Bring whatever ad-hoc test queries the team has been using. We start from there.